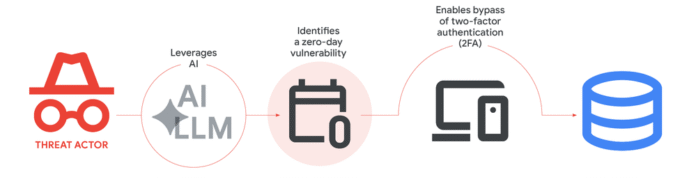

Google just confirmed the first real-world case of hackers using AI to build a working zero-day exploit — one designed to slip past two-factor authentication on a widely used admin tool. The discovery, published Monday by Google’s Threat Intelligence Group, lands in a year where state-backed crews from China and North Korea are already feeding AI models security flaws to weaponize. Here’s what happened, why the exploit got caught, and what it means for the apps and accounts you log into every day.

What Google actually found

Google’s Threat Intelligence Group (GTIG) said a prominent cybercrime group built an AI-built 2FA bypass exploit aimed at a popular open-source web-based administration tool.

Google won’t name the tool, but says it patched the flaw with the vendor before the attackers could launch what they had planned as a mass exploitation campaign — a quieter outcome than recent open-source disasters like the Linux kernel vulnerability called Dirty Frag, which leaked into the wild with no patch ready.

The exploit was written in Python, and once Google’s analysts opened it up, the AI fingerprints were hard to miss. The code had textbook formatting, neatly written help menus, and a hallucinated CVSS severity score — the kind of clean, over-documented output you only see when a large language model (LLM) writes the first draft.

Google says it has “high confidence” that an AI model helped find and weaponize the bug, while ruling out its own Gemini and Anthropic’s Mythos.

Why a 2FA bypass matters to you

Two-factor authentication (2FA) is the second login step — the six-digit code, the prompt on your phone, the security key — that’s supposed to keep someone out of your account even if they’ve stolen your password. If a hacker can bypass 2FA, they don’t need to phish you or guess anything. They just walk in.

The catch here is that the target was an admin tool, not a consumer app. But the bigger story is the method. We’re now at the point where AI can read code the way a security engineer would and spot logic flaws — places where the software trusts something it shouldn’t.

As Google’s report put it, AI can surface dormant logic errors that appear functionally correct to traditional scanners but are strategically broken from a security perspective.

“The tip of the iceberg”

John Hultquist, GTIG’s chief analyst, isn’t sugar-coating where this goes next. In a LinkedIn post on Monday, he wrote, “Frankly, the details of this event are not as important as the evidence that the era of adversary use is here.”

He told CyberScoop the same thing in plainer terms: “We finally uncovered some evidence this is happening. This is probably the tip of the iceberg, and it’s certainly not going to be the last.”

What that means in practice: we don’t get to treat AI-built exploits as a future problem anymore. They’re being built now.

Google’s report also flags North Korean group APT45 sending thousands of repetitive prompts to AI models to validate proof-of-concept exploits, and an alleged China-linked group jailbreaking Gemini using fake security researcher personas to dig up flaws in TP-Link routers.

That China angle isn’t only about exploiting code. The same AI tools now being used by defenders to map China’s grip on the US military supply chain are the mirror image of what offensive teams are doing — read code, find patterns, automate the boring part.

The race we’re watching isn’t AI vs. humans; it’s AI vs. AI, with humans deciding which side gets the budget.

AI is the target too, not just the weapon

There’s a second layer to this story that’s easy to miss. AI models aren’t only being used to build attacks — they’re being attacked themselves. A few months ago, attackers used prompt injection to drain nearly $200K from Grok-related accounts, manipulating the model’s own instructions to do something it was never meant to do. That’s the same trick GTIG saw the China-linked group use to push Gemini past its safety guardrails.

Prompt injection is the AI version of social engineering. Instead of tricking a human into clicking a bad link, the attacker tricks the model into ignoring its own rules — usually by hiding instructions inside something the model is asked to read.

If you’re using an AI assistant that browses the web, summarizes emails, or runs code on your behalf, that’s a real exposure surface. The smarter the agent gets, the more interesting it is to attackers.

The pattern looks familiar

We’ve spent the past few days covering stories that all rhyme with this one — including a breach at education tech giant Instructure that exposed Canvas data for millions of students.

None of those incidents used AI to build the actual bug, but they all sit in the same trend Google’s report is describing: faster discovery, bigger reach, and less time between “researchers found this” and “someone is using it.”

The good news? The same AI that finds flaws can also fix them. Google’s own Big Sleep agent has been finding zero-days for defenders since late 2025, and Mozilla wrote in April about vertigo from how many bugs its defensive AI tools now surface in a single run. The hard part is which side moves faster. Right now, we’re watching both sides scale up at once.

What you can actually do

You’re not patching kernel modules or running threat intelligence — and that’s fine. The takeaways for the rest of us are smaller and more boring than the headline suggests:

- Keep 2FA on. Even with this exploit, hackers still prefer targets where 2FA is the only thing left between them and full access. Turning it off makes you easier, not safer.

- Update apps when they ask. The vendor in Google’s report patched the flaw fast, but that only protects users who actually install the fix.

- Stay skeptical of “official-looking” downloads, especially on Android — the recent wave of fake call history apps that hit 7.3 million Play Store downloads is the version of this story aimed at regular phones.

- Be careful what you paste into AI tools. If you’re using a chatbot or AI agent for work, don’t feed it credentials, API keys, or anything you wouldn’t post publicly. Prompt injection works both ways.

The post Hackers Used AI To Build a Google 2FA Bypass Exploit appeared first on Memeburn.